A Review on Facial Emotion Recognition to Investigate Micro-Expressions

| ✅ Paper Type: Free Essay | ✅ Subject: Computer Science |

| ✅ Wordcount: 2261 words | ✅ Published: 18 May 2020 |

ABSTRACT: Humans interact with one another through speech, body gestures, and facial displays of emotion. Among these, facial expressions play an important role because they are the most natural and effective way to express feelings. Compared with normal facial expressions, micro-expressions can reveal emotions that a person is trying to hide, but they are harder to detect because of their short duration. Over the past years, interest in micro-expressions has therefore increased considerably in fields such as security, psychology, and computer science. The purpose of this paper is to briefly summarize existing methodologies for identifying human micro-expressions. We also review the datasets, features, and performance metrics used in the literature.

Keywords: Micro-expressions, feature extraction, deep learning

1. INTRODUCTION

Facial expression research has a long history and accelerated through the 1970s. The modern theory of basic emotions by Ekman et al. has generated more research than any other in the psychology of emotion [1]. Because micro-expressions are brief and involve only subtle changes, it is difficult to capture the correct emotion with unaided human senses. To investigate these involuntary expressions, Ekman and his team developed a training tool that teaches people to recognize micro-expressions effectively. They once examined a film of a psychiatric patient who later confessed that she had lied to her doctor to conceal her plan to commit suicide. Although the patient seemed happy throughout the video, a frame-by-frame examination revealed a hidden look of anguish that lasted for only two frames, around 0.08 s [2]. This is a good example of the importance of recognizing micro-expressions.

Through extensive studies, Ekman and Friesen have outlined seven universal facial expressions: happiness, sadness, anger, fear, surprise, disgust, and contempt. When an emotional event is captured on video, one or more of these expressions may be detected in a single frame. Whereas macro-expressions (normal facial expressions) are easily noticeable, micro-expressions are much more rapid and occur when a person attempts to suppress the original expression and hide the emotion truly felt.

Two common approaches are used to extract facial features: geometric-feature-based methods and appearance-based methods [3]. In geometric methods, the shape of the face and the locations of facial components are encoded in a feature vector; in simple terms, this amounts to detecting landmarks in the face geometry. In appearance-based methods, image filters are applied either to the whole face or to particular regions, such as the eyes or mouth, to extract changes in appearance.

This paper presents a study of the datasets and methodologies for micro-expression recognition. The paper is organized as follows: Section 2 reviews existing datasets. Section 3 describes feature extraction methods. Section 4 presents emotion classification, and Section 5 concludes the paper.

2. Facial Micro-Expression Datasets

Datasets can be broadly classified into two categories: spontaneous datasets and non-spontaneous datasets.

2.1. Spontaneous datasets

To obtain an accurate dataset, it is necessary to capture true emotions while a person tries to hide them, rather than fake emotions posed by actors. Although eliciting micro-expressions spontaneously is a considerable challenge, several spontaneous datasets are available: the Spontaneous Micro-expression Corpus (SMIC), Chinese Academy of Sciences Micro-Expressions (CASME), Chinese Academy of Sciences Micro-Expressions II (CASME II), A Dataset of Spontaneous Macro-Expressions and Micro-Expressions (CAS(ME)2), and Spontaneous Actions and Micro-Movements (SAMM). Table 1 shows the characteristics of each dataset, and Table 2 lists their emotion classes. CASME II and SAMM have become the focus of research because they offer labeled emotion classes, a high frame rate, and a range of facial movement intensities [1].

Table 1: A summary of spontaneous datasets

| Dataset | SMIC | CASME | CASME II | CAS(ME)2 | SAMM |
| --- | --- | --- | --- | --- | --- |
| Participants | 20 | 35 | 35 | 22 | 32 |
| Frames per Second | 100 & 25 | 60 | 200 | 30 | 200 |
| Resolution | 640 x 480 | 640 x 480, 1280 x 720 | 640 x 480 | 640 x 480 | 2040 x 1088 |
| Samples | 164 | 195 | 247 | 53 | 159 |
| Emotion Classes | 3 | 7 | 5 | 4 | 7 |
| FACS Coded | No | Yes | Yes | No | Yes |
| Ethnicities | 3 | 1 | 1 | 1 | 13 |
| Comments | Emotions are classified into positive, negative and surprise. | Tense and repression in addition to the basic emotions. | Images were saved as MJPEG with the facial area cropped. | Also contains 250 macro-expressions. | As a part of the experiment, each video stimulus was tailored to each participant. |

Table 2: Emotion classes of each dataset

| Dataset | Emotion Classes |
| --- | --- |
| SMIC | Positive, Negative, Surprise |
| CASME | Amusement, Sadness, Disgust, Surprise, Contempt, Fear, Repression, Tense |
| CASME II | Happiness, Disgust, Surprise, Repression, Others |
| CAS(ME)2 | Positive, Negative, Surprise, Other |
| SAMM | Happiness, Surprise, Anger, Contempt, Disgust, Fear, Sadness |

2.2. Non-spontaneous datasets

Three non-spontaneous datasets have been available: the Polikovsky dataset, the York Deception Detection Test (YorkDDT), and USF-HD.

In the Polikovsky dataset, participants were asked to pose the seven basic emotions with low muscle intensity and to return to a neutral expression as quickly as possible. Because its expressions were not recorded naturally, this dataset differs significantly from the spontaneous datasets. The USF-HD dataset is similar to the Polikovsky dataset in that its posed expressions do not reflect a real-world scenario. None of the datasets mentioned above is currently publicly available for further study. Table 3 summarizes the non-spontaneous datasets.

3. Feature Extraction

Figure 1: The basic LBP operator [4]

Different techniques can be used to extract facial features.

3.1. Local Binary Pattern (LBP)

The LBP operator labels the pixels of an image by comparing each pixel's value with the values of its neighbors and treating the result as a binary number. The 256-bin histogram of the LBP labels computed over a region is then used as a texture descriptor. Figure 1 shows the basic LBP operator. The LBP histogram contains information about the distribution of local micro-patterns, such as edges, spots, and flat areas, over the whole image, so it can be used to describe image characteristics statistically.
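
The thresholding step described above can be sketched in a few lines of plain Python. This is a minimal illustration rather than the implementation used in [4]: the function names `lbp_image` and `lbp_histogram` are hypothetical, the clockwise bit order starting at the top-left neighbor is an assumed convention, and border pixels are simply skipped.

```python
def lbp_image(img):
    """Return basic 3x3 LBP codes for the interior pixels of a grayscale image.

    `img` is a list of rows of integer gray values. Border pixels are skipped
    because they lack a full 8-neighborhood.
    """
    # Neighbor offsets (row, col), clockwise from the top-left pixel.
    # The starting point and direction are an assumed convention.
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1),
               (1, 1), (1, 0), (1, -1), (0, -1)]
    rows, cols = len(img), len(img[0])
    codes = []
    for r in range(1, rows - 1):
        row_codes = []
        for c in range(1, cols - 1):
            center = img[r][c]
            code = 0
            for dr, dc in offsets:
                # A neighbor at least as bright as the center contributes a 1 bit.
                bit = 1 if img[r + dr][c + dc] >= center else 0
                code = (code << 1) | bit
            row_codes.append(code)
        codes.append(row_codes)
    return codes


def lbp_histogram(codes):
    """Count how often each of the 256 possible LBP labels occurs."""
    hist = [0] * 256
    for row in codes:
        for code in row:
            hist[code] += 1
    return hist


# A single 3x3 patch: the center pixel (value 4) is compared with its neighbors.
patch = [[5, 9, 1],
         [4, 4, 6],
         [7, 2, 3]]
codes = lbp_image(patch)   # one code, binary 11010011 = 211
hist = lbp_histogram(codes)
```

For real images the same loops would run over every interior pixel, and the resulting histogram would serve as the texture descriptor.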

Table 3: A summary of non-spontaneous datasets

| Dataset | Polikovsky | YorkDDT | USF-HD |
| --- | --- | --- | --- |
| Participants | 11 | 9 | N/A |
| Resolution | 640 x 480 | 320 x 240 | 720 x 1280 |
| Frames per Second | 200 | 25 | 29.7 |
| Samples | 13 | 18 | 100 |
| Emotion Classes | 7 | N/A | 4 |
| FACS Coded | Yes | No | No |
| Ethnicities | 3 | N/A | N/A |

To take the shape information of faces into account, face images are divided equally into small regions from which LBP histograms are extracted (shown in Figure 2). The extracted histograms are concatenated into a single feature histogram that represents both the local texture and the global shape of face images.

Figure 2: Face image is divided into small regions to create histogram [4]
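
The region-based histogram can be sketched as follows, assuming an image of LBP labels has already been computed. The function name `regional_histograms` and the grid parameters are hypothetical, and the sketch assumes the image dimensions are exact multiples of the grid size so that all regions are equal.

```python
def regional_histograms(labels, grid_rows, grid_cols, bins=256):
    """Concatenate per-region label histograms into one feature vector.

    `labels` is a 2D list of LBP labels in the range [0, bins).
    Assumes the image size is divisible by the grid size (equal regions).
    """
    rows, cols = len(labels), len(labels[0])
    region_h, region_w = rows // grid_rows, cols // grid_cols
    feature = []
    for gr in range(grid_rows):
        for gc in range(grid_cols):
            hist = [0] * bins
            for r in range(gr * region_h, (gr + 1) * region_h):
                for c in range(gc * region_w, (gc + 1) * region_w):
                    hist[labels[r][c]] += 1
            # Appending region by region preserves where each pattern occurred.
            feature.extend(hist)
    return feature


# Toy 4x4 label image split into a 2x2 grid of 2x2 regions.
labels = [[0, 0, 1, 1],
          [0, 0, 1, 1],
          [2, 2, 3, 3],
          [2, 2, 3, 3]]
feature = regional_histograms(labels, 2, 2)
```

Because each region keeps its own 256 bins, two faces with the same overall texture statistics but different spatial layouts produce different feature vectors.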

3.2. LBP-TOP

Local Binary Pattern on Three Orthogonal Planes (LBP-TOP) extends the basic idea to video. A video sequence can be viewed as a stack of XY planes along the time axis T, a stack of XT planes along the spatial axis Y, and a stack of YT planes along the spatial axis X. The XY plane mainly reveals spatial information, while the XT and YT planes contain rich information about how pixel grayscale values change over time.

LBP-TOP uses an index code to represent the local pattern of each pixel by comparing its gray value with the gray values of its neighbors. To capture information from the three-dimensional spatio-temporal domain, an LBP code is extracted for each pixel from the XY, XT, and YT planes. One histogram is then formed for each plane, and the three histograms are concatenated into a single histogram that serves as the final LBP-TOP feature vector.

Figure 3: The texture of three planes and the corresponding histograms [5]

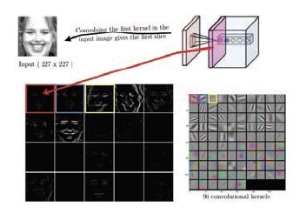
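
A simplified sketch of the three-plane computation is given below. It assumes a radius of one and eight neighbors in every plane, indexes the video as `video[t][y][x]`, and skips border voxels; published LBP-TOP variants usually allow different radii per axis and divide the face into blocks. The names `plane_code` and `lbp_top` are hypothetical.

```python
# Offsets for the 8 neighbors in a 2D plane, clockwise from the top-left.
OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, 1),
           (1, 1), (1, 0), (1, -1), (0, -1)]


def plane_code(video, t, y, x, plane):
    """LBP code of voxel (t, y, x) computed inside one of the three planes."""
    center = video[t][y][x]
    code = 0
    for da, db in OFFSETS:
        if plane == "XY":      # spatial neighbors in the same frame
            value = video[t][y + da][x + db]
        elif plane == "XT":    # neighbors along x and time, fixed row y
            value = video[t + da][y][x + db]
        else:                  # "YT": neighbors along y and time, fixed column x
            value = video[t + da][y + db][x]
        code = (code << 1) | (1 if value >= center else 0)
    return code


def lbp_top(video):
    """Concatenate the XY, XT and YT histograms into a 768-bin feature."""
    n_t, n_y, n_x = len(video), len(video[0]), len(video[0][0])
    feature = []
    for plane in ("XY", "XT", "YT"):
        hist = [0] * 256
        for t in range(1, n_t - 1):
            for y in range(1, n_y - 1):
                for x in range(1, n_x - 1):
                    hist[plane_code(video, t, y, x, plane)] += 1
        feature.extend(hist)
    return feature


# Three 3x3 frames with constant gray value 7 (a completely static clip).
video = [[[7] * 3 for _ in range(3)] for _ in range(3)]
feature = lbp_top(video)
```

In the static clip every neighbor equals the center, so the single interior voxel receives the all-ones code in each plane; any temporal change would alter the XT and YT histograms while leaving the XY histogram of unaffected frames unchanged.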

3.3. Deep Convolutional Neural Network (DCNN)

One of the earliest studies describes facial expression recognition using a DCNN [6]. The frontal face in the image was detected and cropped using OpenCV, and facial features were then extracted using the DCNN.

The first five convolutional layers of the ImageNet network were used to extract facial features. This network has eight learned layers, five convolutional and three fully connected, and was originally trained for object recognition. In this work, features are extracted using only the first five layers, which combine convolution, Rectified Linear Unit (ReLU), Local Response Normalization, and pooling operations. The features obtained after the pooling operation at the fifth layer are used for recognizing facial expressions. Figure 4 illustrates the output of the first convolutional layer when its filters are applied to a face image.

Figure 4: Output of first convolution layer applied on face image [6]
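
To make the layer operations concrete, the sketch below chains one convolution, one ReLU, and one max-pooling step on a tiny image in plain Python. It is only an illustration of these building blocks: the hand-written edge kernel stands in for a learned filter, Local Response Normalization is omitted, and the function names are hypothetical rather than taken from [6].

```python
def conv2d(img, kernel):
    """'Valid' 2D cross-correlation, the operation CNN libraries call convolution."""
    kh, kw = len(kernel), len(kernel[0])
    out = []
    for r in range(len(img) - kh + 1):
        row = []
        for c in range(len(img[0]) - kw + 1):
            row.append(sum(img[r + i][c + j] * kernel[i][j]
                           for i in range(kh) for j in range(kw)))
        out.append(row)
    return out


def relu(fmap):
    """Rectified Linear Unit: negative responses are set to zero."""
    return [[max(0, v) for v in row] for row in fmap]


def max_pool(fmap, size=2):
    """Non-overlapping max pooling; incomplete border windows are dropped."""
    out = []
    for r in range(0, len(fmap) - size + 1, size):
        out.append([max(fmap[r + i][c + j]
                        for i in range(size) for j in range(size))
                    for c in range(0, len(fmap[0]) - size + 1, size)])
    return out


# 5x5 image with a vertical edge between the second and third columns.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
# Hand-written vertical-edge filter standing in for a learned one.
edge_filter = [[-1, 0, 1],
               [-1, 0, 1],
               [-1, 0, 1]]

feature_map = conv2d(image, edge_filter)
pooled = max_pool(relu(feature_map))
```

In a trained network the filter weights are learned from data, and many such filters are applied in parallel at each layer.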

3.4. Gabor filters

A Gabor filter is a linear filter used for texture analysis. It analyzes whether the image contains specific frequency content in specific directions within a localized region around the point of analysis.

These filters behave like band-pass filters and can be used in image processing for feature extraction. The impulse response of a Gabor filter is formed by multiplying a Gaussian envelope function by a complex oscillation.
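
The product of a Gaussian envelope and a complex oscillation can be written out directly. The sketch below builds a small complex Gabor kernel under one common parameterization; the parameter names (`wavelength`, `theta`, `sigma`, `gamma`, `psi`) and the function name `gabor_kernel` are assumptions for illustration, and `size` is expected to be odd so that the kernel has a center pixel.

```python
import cmath
import math


def gabor_kernel(size, wavelength, theta, sigma, gamma=1.0, psi=0.0):
    """Return a size x size complex Gabor kernel as a list of rows.

    wavelength: period of the oscillation in pixels
    theta:      orientation of the oscillation in radians
    sigma:      standard deviation of the Gaussian envelope
    gamma:      spatial aspect ratio of the envelope
    psi:        phase offset of the oscillation
    """
    half = size // 2
    kernel = []
    for y in range(-half, half + 1):
        row = []
        for x in range(-half, half + 1):
            # Rotate coordinates so the oscillation runs along direction theta.
            xr = x * math.cos(theta) + y * math.sin(theta)
            yr = -x * math.sin(theta) + y * math.cos(theta)
            envelope = math.exp(-(xr ** 2 + (gamma * yr) ** 2) / (2 * sigma ** 2))
            carrier = cmath.exp(1j * (2 * math.pi * xr / wavelength + psi))
            row.append(envelope * carrier)
        kernel.append(row)
    return kernel


# A 5x5 kernel tuned to horizontal variation with a 4-pixel period.
kernel = gabor_kernel(size=5, wavelength=4.0, theta=0.0, sigma=2.0)
```

Filtering an image with a bank of such kernels at several orientations and wavelengths yields the responses that are typically used as texture features.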

4. Emotion Classification

Classification is the final stage of a facial emotion recognition system, in which a classifier assigns the expression to a category such as happiness, sadness, surprise, anger, fear, disgust, or neutral.

4.1. Support Vector Machine

The Support Vector Machine (SVM) is widely used in pattern recognition tasks. It is a machine learning approach grounded in statistical learning theory that seeks the maximum-margin hyperplane separating the classes, which gives it good generalization performance. For facial expression classification, the SVM is trained on the extracted features.

Depending on the number of classes to which the data samples belong, SVM classification is either a two-class problem or a multi-class problem.
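
For the two-class case, the idea can be illustrated with a linear soft-margin SVM trained by subgradient descent on the regularized hinge loss. This is a simplified stand-in for the quadratic-programming solvers normally used, not the method of any cited work; the function names and the hyperparameters `lam`, `lr`, and `epochs` are illustrative assumptions.

```python
def train_linear_svm(samples, labels, lam=0.01, lr=0.1, epochs=100):
    """Fit w and b by subgradient descent on the L2-regularized hinge loss.

    Labels must be +1 or -1. The hyperparameters are illustrative defaults.
    """
    w = [0.0] * len(samples[0])
    b = 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            score = sum(wi * xi for wi, xi in zip(w, x)) + b
            if y * score < 1:
                # The sample violates the margin: the hinge term is active.
                w = [wi - lr * (lam * wi - y * xi) for wi, xi in zip(w, x)]
                b += lr * y
            else:
                # Only the regularizer contributes; it shrinks w slightly.
                w = [wi - lr * lam * wi for wi in w]
    return w, b


def predict(w, b, x):
    """Return +1 or -1 depending on the side of the hyperplane."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b >= 0 else -1


# Two well-separated toy classes in a 2D feature space.
samples = [[2.0, 2.0], [3.0, 3.0], [-2.0, -2.0], [-3.0, -3.0]]
labels = [1, 1, -1, -1]
w, b = train_linear_svm(samples, labels)
```

A multi-class problem is commonly reduced to several two-class problems, for example by training one such classifier per class against all the others.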

4.2. k-Means Clustering

Clustering is the process of grouping a set of physical or abstract objects into classes of similar objects. A cluster is a collection of data objects that are similar to one another and dissimilar to the objects in other clusters. Clustering is also called data segmentation in some applications because it partitions large datasets into groups according to their similarity. The best-known and most commonly used partitioning method is k-means.

In the k-means partitioning algorithm, each cluster’s center is represented by the mean value of the objects in the cluster.

Input:

k: the number of clusters,

D: a data set containing n objects.

Output: A set of k clusters.

Method:

1) Arbitrarily choose k objects from D as the initial cluster centers;

2) Repeat

3) (Re) assign each object to the cluster to which the object is the most similar, based on the mean value of the objects in the cluster;

4) Update the cluster means, i.e., calculate the mean value of the objects for each cluster;

5) Until no change; [7].
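
The steps above can be sketched in plain Python as follows. To keep the example deterministic, the "arbitrary" initial centers are taken to be the first k objects, which is an assumption of this sketch; squared Euclidean distance is used as the similarity measure, and the `max_iter` safeguard is an addition not present in the listing.

```python
def kmeans(data, k, max_iter=100):
    """Partition `data` (a list of equal-length numeric tuples) into k clusters."""
    # Step 1: choose k initial centers (here simply the first k objects).
    centers = [list(p) for p in data[:k]]
    assignment = None
    for _ in range(max_iter):
        # Step 3: (re)assign each object to the nearest cluster center.
        new_assignment = [
            min(range(k),
                key=lambda j: sum((a - b) ** 2 for a, b in zip(p, centers[j])))
            for p in data
        ]
        # Step 5: stop when no assignment changes.
        if new_assignment == assignment:
            break
        assignment = new_assignment
        # Step 4: update each center to the mean of its members.
        for j in range(k):
            members = [p for p, a in zip(data, assignment) if a == j]
            if members:  # keep the old center if a cluster becomes empty
                centers[j] = [sum(col) / len(members) for col in zip(*members)]
    return assignment, centers


data = [(1, 1), (9, 9), (1, 2), (2, 1), (8, 9), (9, 8)]
assignment, centers = kmeans(data, k=2)
```

The result depends on the initial centers, so practical implementations often run the algorithm several times with different starting points.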

4.3. Hidden Markov Model (HMM)

The Hidden Markov Model (HMM) classifier is a statistical model that categorizes expressions into different types. A Hidden Conditional Random Fields (HCRF) representation is used for classification, with full-covariance Gaussian distributions for superior classification performance [7].

4.4. ID3 Decision Tree

The ID3 Decision Tree (DT) classifier is a rule-based classifier that extracts predefined rules to produce competent rules. The predefined rules are generated from a decision tree constructed using the information gain metric, and classification is performed using the least Boolean evaluation [7].
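
The information gain metric that ID3 uses to choose a splitting attribute can be computed as shown below. This sketch covers only the metric for a single categorical attribute, not the full tree construction; the function names and the toy attribute values are hypothetical.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())


def information_gain(values, labels):
    """Reduction in entropy obtained by splitting on a categorical attribute.

    `values[i]` is the attribute value of sample i, `labels[i]` its class.
    """
    n = len(labels)
    remainder = 0.0
    for v in set(values):
        subset = [lab for val, lab in zip(values, labels) if val == v]
        remainder += len(subset) / n * entropy(subset)
    return entropy(labels) - remainder


labels = ["happy", "happy", "sad", "sad"]
mouth = ["up", "up", "down", "down"]     # separates the classes perfectly
brow = ["raised", "flat", "raised", "flat"]  # carries no class information

gain_mouth = information_gain(mouth, labels)
gain_brow = information_gain(brow, labels)
```

ID3 would split on the attribute with the largest gain, here the hypothetical `mouth` attribute, and then repeat the procedure on each resulting subset.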

4.5. Learning Vector Quantization (LVQ)

Learning Vector Quantization (LVQ) is a prototype-based classification algorithm, trained on labeled data, with two layers: a competitive layer and an output layer. The neurons of the competitive layer represent subclasses. The neuron in the competitive layer that best matches the input activates the output-layer neuron of the class to which it belongs.
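
One training step of the basic LVQ1 rule, which relies on the class label of each training sample, can be sketched as follows. The competitive-layer neurons are represented as prototype vectors; the function name `lvq1_step` and the learning rate `lr` are illustrative assumptions.

```python
def lvq1_step(prototypes, proto_labels, x, label, lr=0.1):
    """Apply one LVQ1 update in place and return the index of the winner.

    prototypes:   list of prototype vectors (competitive-layer neurons)
    proto_labels: class label attached to each prototype
    x, label:     one training sample and its class
    """
    # Competitive step: the prototype closest to x wins.
    winner = min(range(len(prototypes)),
                 key=lambda i: sum((p - v) ** 2
                                   for p, v in zip(prototypes[i], x)))
    # Move the winner toward x if its class matches, otherwise away from x.
    sign = 1.0 if proto_labels[winner] == label else -1.0
    prototypes[winner] = [p + sign * lr * (v - p)
                          for p, v in zip(prototypes[winner], x)]
    return winner


prototypes = [[0.0, 0.0], [10.0, 10.0]]
proto_labels = ["happy", "sad"]

# Correctly matched sample: prototype 0 is pulled toward it.
first = lvq1_step(prototypes, proto_labels, [1.0, 1.0], "happy", lr=0.5)
# Mismatched sample: prototype 1 wins but is pushed away.
second = lvq1_step(prototypes, proto_labels, [9.0, 9.0], "happy", lr=0.5)
```

After training, a new sample is assigned the class of its nearest prototype.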

4.6. KNN Classifier

The k-nearest neighbor (KNN) algorithm classifies objects based on the closest training examples in the feature space and is among the simplest of all machine learning algorithms. In the classification process, an unlabeled query point is assigned a label based on the labels of its k nearest neighbors [8].

Typically, the object is classified by a majority vote among the labels of its k nearest neighbors. If k = 1, the object is simply assigned the class of the object nearest to it. When there are only two classes, k should be an odd integer to avoid tied votes. However, ties can still occur with an odd k in multiclass classification. After each image is converted to a fixed-length vector of real numbers, the most common distance function for KNN, the Euclidean distance, is used [8]: $d(\mathbf{x}, \mathbf{y}) = \sqrt{\sum_{i=1}^{n} (x_i - y_i)^2}$, where $\mathbf{x}$ and $\mathbf{y}$ are two feature vectors of length $n$.
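
A minimal sketch of this voting procedure with Euclidean distance is shown below. The function names and the toy feature vectors are hypothetical; ties in the vote are broken in favor of the label encountered first among the nearest neighbors, which is a choice of this sketch rather than part of the method in [8].

```python
import math
from collections import Counter


def euclidean(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def knn_predict(train_x, train_y, query, k=3):
    """Classify `query` by majority vote among its k nearest training samples."""
    neighbors = sorted(zip(train_x, train_y),
                       key=lambda pair: euclidean(pair[0], query))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]


# Toy 2D feature vectors standing in for fixed-length image descriptors.
train_x = [(0, 0), (0, 1), (1, 0), (5, 5), (5, 6), (6, 5)]
train_y = ["happy", "happy", "happy", "sad", "sad", "sad"]

near_origin = knn_predict(train_x, train_y, (0.5, 0.5), k=3)
far_corner = knn_predict(train_x, train_y, (5.5, 5.5), k=3)
```

With k = 1 the function reduces to returning the label of the single closest training sample.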

5. Conclusion

We have presented a review of datasets, feature extraction methods, and classification methods for micro-expression analysis. The goal of this paper is to provide insights and recommendations for advancing micro-expression analysis research. Two types of datasets are available: spontaneous and non-spontaneous. For feature extraction, we discussed LBP, LBP-TOP, DCNN, and Gabor filters. The extracted features must then be passed to a classifier; we described the SVM, k-means clustering, HMM, ID3 decision tree, LVQ, and KNN techniques.

REFERENCES

[1] A. K. D. M. H. Y. Walied Merghani, “A Review on Facial Micro-Expressions Analysis: Datasets, Features and Metrics,” p. 19, 7 May 2018.

[2] Q. &. S. X. &. F. X. Wu, “The Machine Knows What You Are Hiding: An Automatic Micro-expression Recognition System,” no. 0302-9743, pp. 152-162, 2011.

[3] G. Z. X. H. W. Z. Xiohua Huang, “Spontaneous facial micro-expression analysis using Spatiotemporal Completed Local Quantized Patterns,” Neurocomputing, vol. 175, pp. 564-578, 29 January 2016.

[4] S. G. P. W. M. Caifeng Shan, “Facial expression recognition based on Local Binary Patterns: A comprehensive study,” Image and Vision Computing, vol. 27, pp. 803–816, 2009.

[5] L. X. W. S.-J. Z. G. L. Y.-J. Yan W-J, “CASME II: An Improved Spontaneous Micro-Expression Database and the Baseline Evaluation,” 27 January 2014.

[6] R. M. P. M. P. M. V. Mayya, “Automatic Facial Expression Recognition Using DCNN,” vol. 93, pp. 6-8, 2016.

[7] A Survey on Human Face Expression Recognition Techniques

[8] Facial Expression Recognition Algorithm Based On KNN Classifier
