Microsoft Surface Table: 3D Modelling and Touch Controls

| ✅ Paper Type: Free Essay | ✅ Subject: Engineering |

| ✅ Wordcount: 1611 words | ✅ Published: 30 Aug 2017 |

Background and Context

Three-dimensional (3D) modelling is the process of creating a 3D representation of a surface or object by manipulating polygons, edges, and vertices in simulated 3D space [1]. 3D modelling is used in many industries, including virtual reality, video games, television and motion pictures. 3D modelling software generates a model through a variety of tools and approaches, including [2]:

- Simple polygons.

- 3D primitives – simple polygon-based shapes, such as pyramids, cubes, spheres, cylinders, and cones

- Spline curves – smooth curves shaped by two or more defined control points [3].

- NURBS (non-uniform rational B-splines) – computationally complex, smooth curves and surfaces defined by weighted control points and a knot vector; they generalise both B-splines and Bézier curves [3].
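The spline and NURBS entries above can be illustrated with the simplest related case, a Bézier curve. The sketch below evaluates a point on a Bézier curve using de Casteljau's algorithm, which repeatedly interpolates linearly between neighbouring control points. It is an illustrative example rather than code from this project, and the function names are hypothetical. A NURBS curve extends the same idea by adding a non-uniform knot vector and a weight for each control point.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]


def lerp(a: Point, b: Point, t: float) -> Point:
    """Linearly interpolate between points a and b (t=0 gives a, t=1 gives b)."""
    return tuple((1.0 - t) * ai + t * bi for ai, bi in zip(a, b))


def de_casteljau(control_points: List[Point], t: float) -> Point:
    """Evaluate a Bezier curve at parameter t in [0, 1].

    Each pass replaces n points with n - 1 interpolated points until a
    single point, the point on the curve, remains.
    """
    if not control_points:
        raise ValueError("at least one control point is required")
    points = list(control_points)
    while len(points) > 1:
        points = [lerp(points[i], points[i + 1], t) for i in range(len(points) - 1)]
    return points[0]


if __name__ == "__main__":
    # A cubic curve: four control points in 3D space.
    ctrl = [(0.0, 0.0, 0.0), (1.0, 2.0, 0.0), (3.0, 2.0, 0.0), (4.0, 0.0, 0.0)]
    for t in (0.0, 0.5, 1.0):
        print(t, de_casteljau(ctrl, t))
```

The curve starts at the first control point and ends at the last. The inner points pull the curve towards them without, in general, lying on it.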

Scope and Objectives

In this project, the 3D model produced was of the 5G Innovation Centre at the University of Surrey. The final version of the prototype is intended to model the whole University campus and to display the temperature, noise level, and other statistics for every area on the map. To record these data, an “IoT Desk Egg” [4] was created. This Desk Egg is a multi-sensor suite with feedback mechanisms and wireless communication capabilities, which measures and records environmental data such as light, sound, temperature, noise, humidity and dust density. Individually, these readings have limited value, but when combined and analysed in aggregate, they provide rich context about the sensor's immediate surroundings.
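In its simplest form, analysing readings in aggregate means grouping them by area and summarising each sensor type. The sketch below uses a hypothetical record format (`area`, `sensor`, `value`). The Desk Egg's actual data format is not described here, so the field names and sample values are illustrative only.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean
from typing import Dict, Iterable


@dataclass(frozen=True)
class Reading:
    """One sensor sample (hypothetical format, for illustration only)."""
    area: str      # e.g. a room on the campus map
    sensor: str    # e.g. "temperature", "noise", "humidity"
    value: float


def summarise(readings: Iterable[Reading]) -> Dict[str, Dict[str, float]]:
    """Return the mean value of each sensor type, grouped by area."""
    grouped: Dict[str, Dict[str, list]] = defaultdict(lambda: defaultdict(list))
    for r in readings:
        grouped[r.area][r.sensor].append(r.value)
    return {
        area: {sensor: mean(values) for sensor, values in sensors.items()}
        for area, sensors in grouped.items()
    }


if __name__ == "__main__":
    samples = [
        Reading("room_a", "temperature", 21.0),
        Reading("room_a", "temperature", 23.0),
        Reading("room_a", "noise", 40.0),
        Reading("room_b", "temperature", 19.5),
    ]
    print(summarise(samples))
```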

The objective of this project is to enhance the model with touch navigation capabilities similar to 3D navigation on a mobile phone or tablet [5]. The current model is built for a Samsung SUR40 touch table running Microsoft Surface. It has been implemented using PixelSense together with Microsoft Surface Game Studio 4.0 and Windows Presentation Foundation (WPF). Enhancing the model first required investigating what changes touch navigation would involve, and then implementing and testing those changes.

Introduction

Microsoft PixelSense

Microsoft PixelSense orientation capabilities are integrated into the application, which also supports multiple simultaneous touch points [6]. The Samsung SUR40 can only run Windows 7, because the Surface SDKs are fully supported only on Windows 7 and not on any newer operating system.

Windows Presentation Foundation

Developing an application for Microsoft Surface devices, and for PixelSense in particular, requires Microsoft Surface Game Studio 4.0 and Windows Presentation Foundation (WPF). Rowe (2012) gives several guidelines to help a developer create a good application for the Samsung SUR40 Surface Table [7]:

- Use a darker background, as it gives better quality during touch contact.

- Support multiple simultaneous interactions, since both the user's fingers and physical objects can be detected.

- Allow actions to be triggered only by the user's fingers to avoid detection errors, and add sound effects that acknowledge each finger interaction.

- Ensure that the application gives the user an immersive, high-quality experience.

- Since the PixelSense table has four corners, let users rotate the application's orientation for convenience, and offer an easy way of leaving the application.

- Make interactive elements well sized and well spaced to prevent input errors.

Working with Touch Input

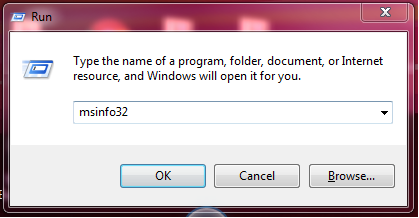

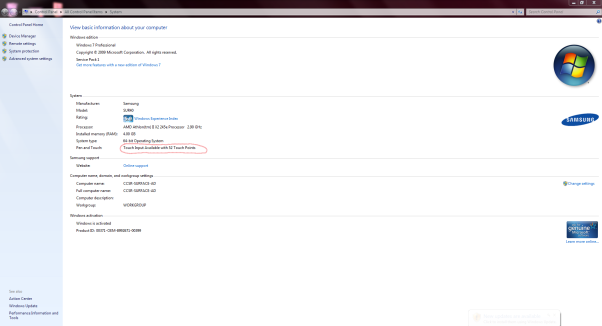

A key prerequisite for implementing the 3D touch controls is that the hardware supports touch input. This can be checked with the System Information app, which is launched from the “Run” dialog box by entering “msinfo32.exe” (Fig 1). As Fig 2 shows, the Samsung SUR40 Surface Table supports 52 individual touch points. This confirms that the table supports touch interaction and that the touch framework is ready to use.

Fig 1. The Run dialog, with the corresponding syntax, used to launch the system information window.

Fig 2. A System Properties window showing details of the touch capability.
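The same check can also be done programmatically. The sketch below is an alternative that the original project did not use. It calls the Win32 `GetSystemMetrics` function through Python's `ctypes` with `SM_DIGITIZER` (94) and `SM_MAXIMUMTOUCHES` (95), which are available from Windows 7 onwards. On any other platform it returns `None`.

```python
import ctypes
import sys
from typing import Optional, Tuple

# Win32 system-metric indices (see the GetSystemMetrics documentation).
SM_DIGITIZER = 94
SM_MAXIMUMTOUCHES = 95
# Bit flag reported under SM_DIGITIZER when the digitizer is ready for input.
NID_READY = 0x80


def touch_capabilities() -> Optional[Tuple[bool, int]]:
    """Return (digitizer_ready, max_touch_points), or None when not on Windows."""
    if sys.platform != "win32":
        return None
    user32 = ctypes.windll.user32  # only exists on Windows
    digitizer_flags = user32.GetSystemMetrics(SM_DIGITIZER)
    max_touches = user32.GetSystemMetrics(SM_MAXIMUMTOUCHES)
    return bool(digitizer_flags & NID_READY), max_touches


if __name__ == "__main__":
    caps = touch_capabilities()
    if caps is None:
        print("Not running on Windows")
    else:
        ready, count = caps
        print(f"Digitizer ready: {ready}, touch points: {count}")
```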

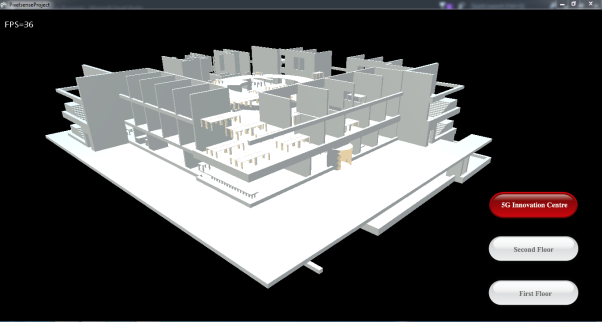

The initial version of the 3D modelling software has poor touch navigation controls. It partly compensates with buttons mapped to specific viewpoints of the model. These buttons provide a way of navigating the model, but they lack the fluidity of the touch input found in other 3D modelling software.

Fig 3. The initial version of the software, with navigation buttons.

Development Environment

Several software packages must be installed to work on this project. They are required specifically for creating applications for a Microsoft PixelSense device.

These include:

- Windows 7

- Microsoft Visual Studio 2015

- Microsoft XNA Framework Redistributable 4.0 Refresh

- Microsoft XNA Game Studio Platform Tools

- Microsoft Surface 2.0 SDK

- Microsoft Surface 2.0 Runtime

- GitEXT

Windows 7

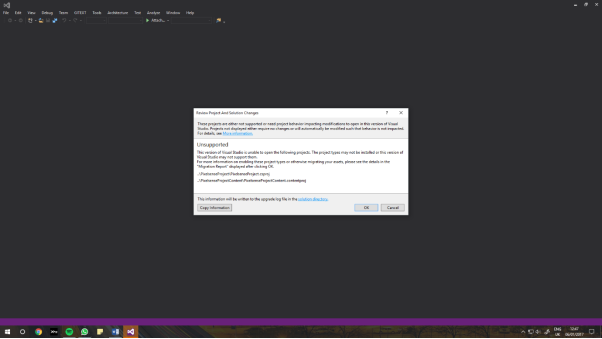

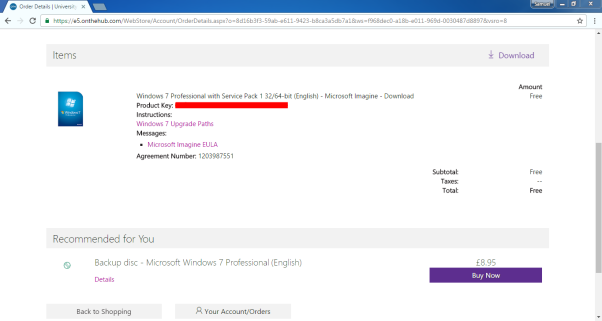

Windows 7 is the latest operating system that the Surface SDK supports, so all programming had to be done on a PC running Windows 7. Running these programs on a Windows 10 PC produces an error that could not be fixed (Fig 4). A Windows 7 license was therefore needed, and Microsoft DreamSpark provides one free of charge to University of Surrey students. A bootable USB key was created from the downloaded ISO file using “DiskPart”, in order to dual boot Windows 7 on the laptop used. Because DiskPart erases the entire contents of the flash drive, it is advisable to use an empty drive or to back up its contents first.

Fig 4. The error shown when the program is being opened on a Windows 10 PC.

Fig 5. Image showing the free purchase of a Windows 7 Operating System, using DreamSpark.

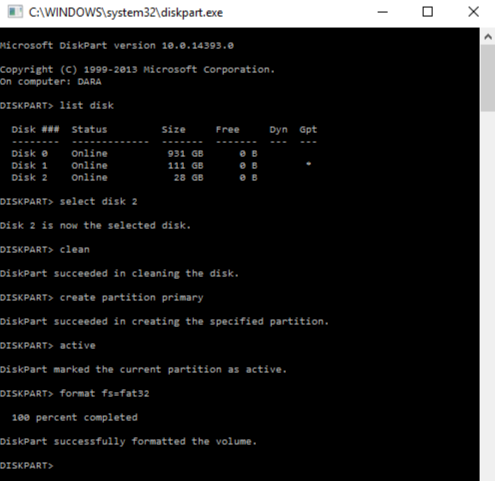

DiskPart is the built-in Windows disk management program. When it is launched from the command prompt (CMD), the following commands create a bootable USB key on any appropriately sized flash drive:

- “List disk” – lists all disk drives connected to the system with their sizes and disk numbers (“Disk ###”) for easy reference.

- “select disk x” – where x is the disk number of the flash drive, tells DiskPart that the following commands apply to this disk.

- “Clean” – removes all previous partitions and formatting from the drive, emptying it.

- “Create partition primary” – creates a primary partition on the cleaned drive.

- “Select partition 1” – selects the newly created partition.

- “Active” – marks the selected partition as active (bootable).

- “Format fs=fat32” – formats the partition with the requested file system, here FAT32, a legacy file system recognised by most BIOS (Basic Input Output System) firmware.

Fig 5. The image shows the procedure for creating a USB Key, Disk 2.
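DiskPart can also read these commands from a text file using `diskpart /s <script>`. The Python sketch below only builds that script text for a given disk number, and the helper name is hypothetical. Running the resulting script with administrator rights erases the selected disk, so check the disk number carefully against the `list disk` output first.

```python
from typing import List


def build_diskpart_script(disk_number: int) -> str:
    """Build a DiskPart script that prepares a bootable FAT32 USB key.

    WARNING: running this script erases everything on the selected disk.
    """
    if disk_number < 0:
        raise ValueError("disk number must be non-negative")
    commands: List[str] = [
        f"select disk {disk_number}",  # the flash drive, as numbered by 'list disk'
        "clean",                       # remove existing partitions and formatting
        "create partition primary",    # one primary partition
        "select partition 1",          # select the new partition
        "active",                      # mark it as active (bootable)
        "format fs=fat32",             # FAT32 is recognised by most BIOS firmware
    ]
    return "\n".join(commands) + "\n"


if __name__ == "__main__":
    # Print only; save the text to a file and run it manually, e.g.
    #   diskpart /s usb_key.txt
    print(build_diskpart_script(2))
```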

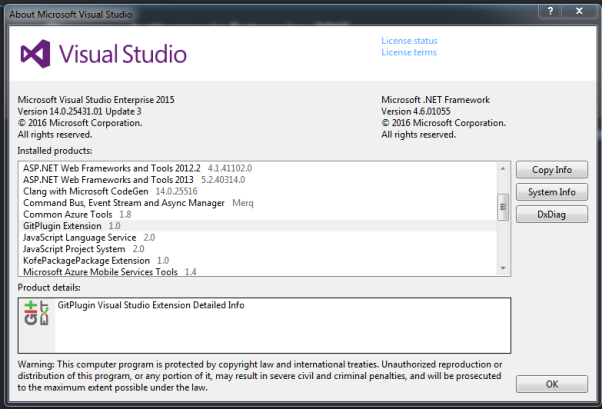

Visual Studio 2015

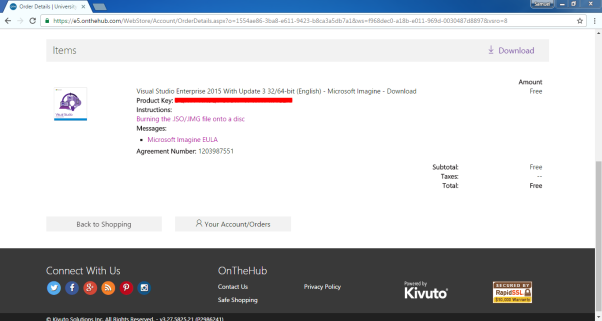

Some editions of Microsoft Visual Studio 2015 are free, but the Enterprise edition requires a product key. University of Surrey students receive one free of charge through their DreamSpark account.

Fig 6. Free purchase of Visual Studio Enterprise using DreamSpark.

Microsoft XNA Framework and Microsoft Surface SDK

The Microsoft Surface SDK installer was downloaded and installed as an extension to Visual Studio 2015. It enables Visual Studio to build projects for the Microsoft Surface XNA Game Studio framework. The installer sets up two important frameworks: Microsoft Surface WPF and Microsoft Surface XNA Game Studio 4.0, which help developers create two-dimensional (2D) and three-dimensional (3D) applications respectively. After a successful installation, creating a new project in Visual Studio should show something similar to Fig 7.

Fig 7. The image shows a properly installed XNA Game Studio Framework.

GitEXT

GitEXT is a Windows extension that helps manage a Git repository. The program's source code was developed alongside several colleagues, who had other objectives for updating the 3D modelling software. A Git repository helps manage the modifications that different editors make to the same source code.

Fig 8. The image shows an installed git extension for version management.

Camera Movement

Moving a camera around an object presents the viewer with different views from different positions, and implementing this is an essential task in the application. It requires a series of matrix calculations to move the camera from its current position to the desired one while displaying the object with high quality and performance. As noted earlier (Fig 3), the current camera does not move freely around the model. Instead, it can occupy only a set of fixed positions, which are selected with the arrow buttons.

To fix this problem, a touch framework had to be implemented.
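The matrix calculations involved can be sketched as an orbit camera. The camera position is derived from a yaw angle, a pitch angle and a distance from the target, and a view matrix is then built from that position. The Python below mirrors the right-handed look-at construction used by XNA's `Matrix.CreateLookAt` (row-vector convention, with the translation in the bottom row). The function names are hypothetical, and the code illustrates the technique rather than reproducing the project's implementation.

```python
import math
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Matrix = List[List[float]]  # 4x4, row-vector convention: v' = v * M


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def normalize(v: Vec3) -> Vec3:
    length = math.sqrt(dot(v, v))
    return (v[0] / length, v[1] / length, v[2] / length)


def orbit_position(target: Vec3, yaw: float, pitch: float, radius: float) -> Vec3:
    """Camera position on a sphere of the given radius around the target.

    Yaw rotates about the vertical (Y) axis and pitch raises the camera above
    the horizontal plane, both in radians. Pitch is clamped just short of
    +/-90 degrees so that the view direction never becomes parallel to 'up'.
    """
    limit = math.radians(89.0)
    pitch = max(-limit, min(limit, pitch))
    return (target[0] + radius * math.cos(pitch) * math.sin(yaw),
            target[1] + radius * math.sin(pitch),
            target[2] + radius * math.cos(pitch) * math.cos(yaw))


def look_at(eye: Vec3, target: Vec3, up: Vec3 = (0.0, 1.0, 0.0)) -> Matrix:
    """Right-handed view matrix laid out like XNA's Matrix.CreateLookAt."""
    z = normalize(sub(eye, target))  # the camera looks down its -Z axis
    x = normalize(cross(up, z))
    y = cross(z, x)
    return [
        [x[0], y[0], z[0], 0.0],
        [x[1], y[1], z[1], 0.0],
        [x[2], y[2], z[2], 0.0],
        [-dot(x, eye), -dot(y, eye), -dot(z, eye), 1.0],
    ]


def transform_point(p: Vec3, m: Matrix) -> Vec3:
    """Apply a 4x4 matrix to a point using the row-vector convention."""
    row = (p[0], p[1], p[2], 1.0)
    return tuple(sum(row[i] * m[i][j] for i in range(4)) for j in range(3))


if __name__ == "__main__":
    eye = orbit_position((0.0, 0.0, 0.0), yaw=0.0, pitch=0.0, radius=10.0)
    view = look_at(eye, (0.0, 0.0, 0.0))
    print(eye, transform_point((0.0, 0.0, 0.0), view))
```

With this approach, a touch handler would only need to update `yaw`, `pitch` and `radius` and then rebuild the view matrix each frame. The target always ends up straight ahead of the camera, at distance `radius` along the view-space -Z axis.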

Touch Gesture

The program's existing touch functionality relied on raw input and only moved the model around a fixed point, which made navigation with the touch screen somewhat ineffective.

The installed XNA framework includes a touch input namespace, “Microsoft.Xna.Framework.Input.Touch”. After extensive research into this namespace, using it made implementing a new gesture-based touch framework for the code straightforward.

The namespace supports gestures such as tap, double tap, horizontal drag and several others, including free drag and pinch.
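In XNA, gestures are enabled through `TouchPanel.EnabledGestures` and read in a loop using `TouchPanel.IsGestureAvailable` and `TouchPanel.ReadGesture()`. Each call returns a `GestureSample` with properties such as `GestureType`, `Position`, `Position2`, `Delta` and `Delta2`. Because that API is C#, the sketch below shows only the language-neutral step in Python: turning a drag delta into yaw and pitch changes, and a two-finger pinch into a zoom. The `CameraState` type, the sensitivity constant and the zoom limits are hypothetical values chosen for illustration.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Vec2 = Tuple[float, float]

# Hypothetical tuning values, chosen for illustration only.
RADIANS_PER_PIXEL = 0.005
MIN_RADIUS, MAX_RADIUS = 2.0, 200.0
PITCH_LIMIT = math.radians(89.0)


@dataclass
class CameraState:
    """Orbit-camera parameters driven by touch gestures (hypothetical)."""
    yaw: float = 0.0
    pitch: float = 0.0
    radius: float = 50.0


def apply_drag(cam: CameraState, delta: Vec2) -> None:
    """One-finger drag: horizontal movement orbits, vertical movement tilts.

    Dragging to the right decreases yaw here; the sign is a design choice.
    """
    dx, dy = delta
    cam.yaw -= dx * RADIANS_PER_PIXEL
    cam.pitch = max(-PITCH_LIMIT, min(PITCH_LIMIT, cam.pitch + dy * RADIANS_PER_PIXEL))


def apply_pinch(cam: CameraState, p1: Vec2, p2: Vec2, d1: Vec2, d2: Vec2) -> None:
    """Two-finger pinch: spreading the fingers moves the camera closer.

    p1 and p2 are the current finger positions, and d1 and d2 their movement
    since the previous sample, so the previous positions are p - d.
    """
    prev1 = (p1[0] - d1[0], p1[1] - d1[1])
    prev2 = (p2[0] - d2[0], p2[1] - d2[1])
    prev_dist = math.dist(prev1, prev2)
    cur_dist = math.dist(p1, p2)
    if prev_dist == 0.0 or cur_dist == 0.0:
        return  # degenerate sample: both fingers at the same point
    cam.radius = max(MIN_RADIUS, min(MAX_RADIUS, cam.radius * prev_dist / cur_dist))
```

In the C# application, `apply_drag` would correspond to handling a `FreeDrag` (or `HorizontalDrag`) sample and `apply_pinch` to a `Pinch` sample. Scaling the radius by the ratio of finger distances, rather than by a fixed step, keeps the zoom proportional to how far the fingers move.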

Development

Coding began after researching the relevant libraries and namespaces to gain a thorough understanding of the initial source code.

During initial coding, it was discovered that the model was navigated by rotating the camera around a fixed object, rather than by manipulating the model itself in front of a stationary camera. Both approaches create the same illusion of the model turning about its axes.

Following this logic, the touch code had to move the camera rather than the model.
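A short derivation shows why moving the camera produces the same picture as moving the model. It uses column vectors for clarity, whereas XNA itself uses the row-vector convention. Let a camera have orientation $C$ (a rotation matrix) and position $\mathbf{e}$. A world point $\mathbf{p}$ then appears in camera coordinates as $\mathbf{q} = C^{\top}(\mathbf{p} - \mathbf{e})$. Orbiting the camera about the target $\mathbf{t}$ by a rotation $R$ gives $\mathbf{e}' = \mathbf{t} + R(\mathbf{e} - \mathbf{t})$ and $C' = RC$, so that:

```latex
\begin{aligned}
\mathbf{q}' &= (RC)^{\top}\bigl(\mathbf{p} - \mathbf{t} - R(\mathbf{e} - \mathbf{t})\bigr) \\
            &= C^{\top}R^{\top}(\mathbf{p} - \mathbf{t}) - C^{\top}(\mathbf{e} - \mathbf{t}) \\
            &= C^{\top}\bigl(\mathbf{t} + R^{\top}(\mathbf{p} - \mathbf{t}) - \mathbf{e}\bigr)
\end{aligned}
```

The second line uses $R^{\top}R = I$. The last line is exactly what the original, unmoved camera sees after the model has been rotated about the target by $R^{\top} = R^{-1}$. On screen, therefore, orbiting the camera by $R$ cannot be distinguished from rotating the model by the inverse rotation.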

While implementing the touch framework, redundant elements such as the camera-movement buttons were removed to provide a more immersive experience when using the 3D model.

Fig 9. The image shows the 3D model with redundancies removed.
